The end of a university semester is always a moment for reflection, but this one felt different. After teaching three new compressed courses in seven weeks while integrating AI tools into nearly every phase of the process, my co-host Andrew Maynard and I used this episode of Modem Futura to take stock of what actually happened.

The conversation centers on a practice that quietly reshaped my teaching this semester: vibe coding. Not in the Silicon Valley sense of building apps for market, but in the deeply practical sense of an instructor recognizing a gap in student preparation and building an interactive resource in 20 minutes flat. Self-contained (and shareable) HTML files that functioned like polished apps. Jeopardy-style review games for graduate students. World-building card games for in-class collaboration. All created through natural language conversations with AI, all deployed within hours of the idea forming.

But this episode quickly moves beyond the tools themselves into the territory Modem Futura does best: asking what this means for humans. Andrew and I explore why AI in the classroom only works when it sits on top of something fundamentally relational: the trust between instructor and student. The transparency about how I built these resources, including their flaws, became part of the pedagogy itself. Students weren't just using AI-generated content. They were learning to interrogate it, evaluate it, and understand what it could and couldn't do.

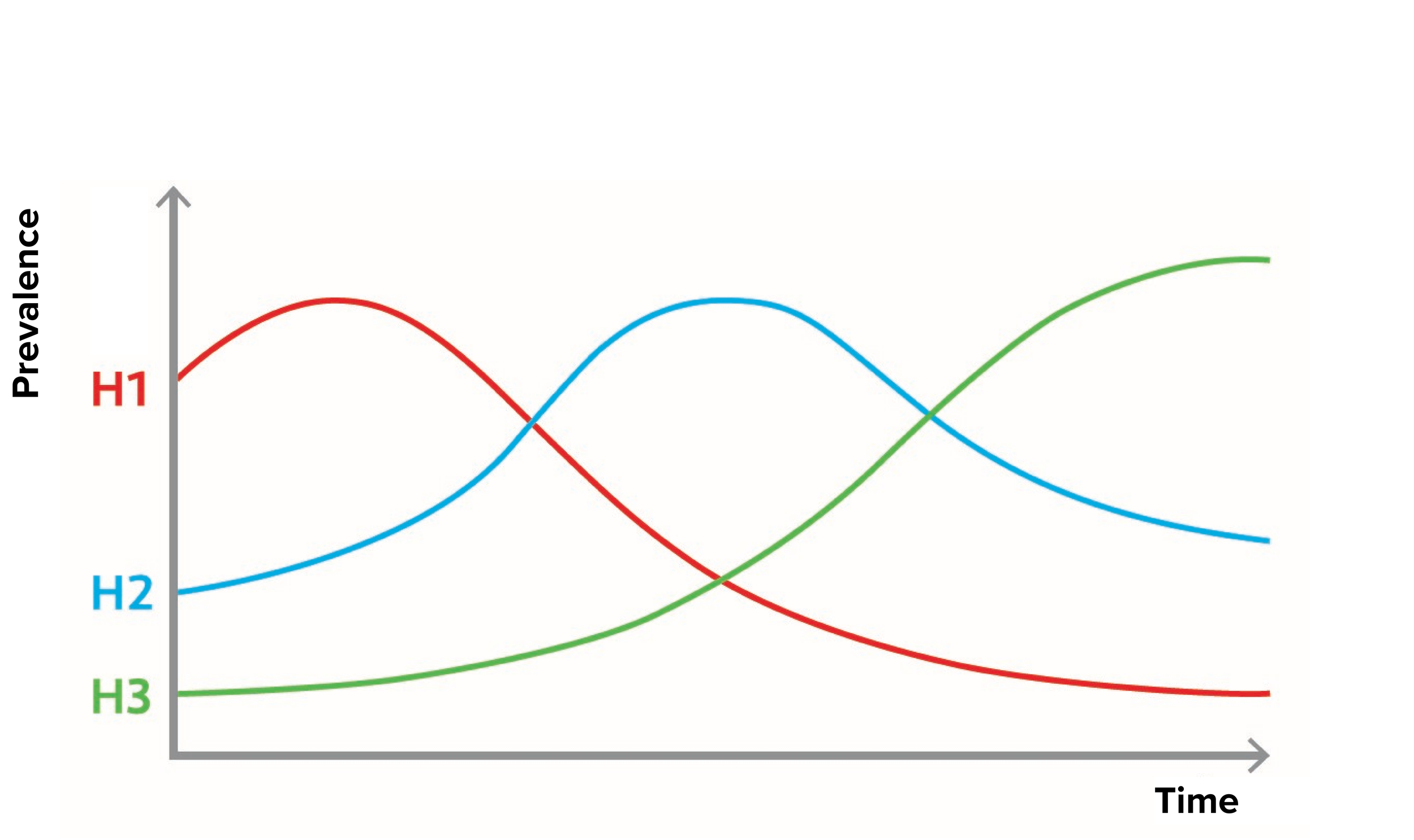

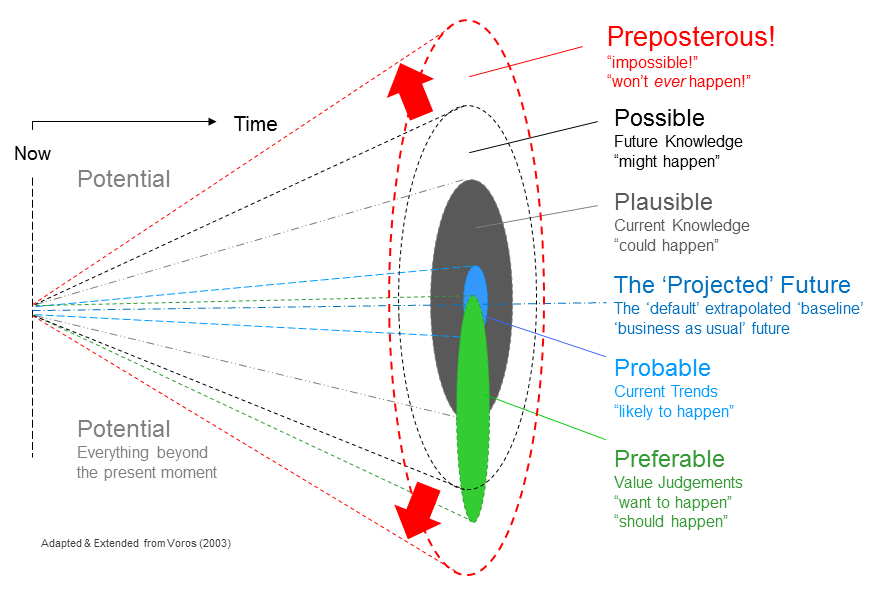

The conversation surfaces a tension that matters well beyond higher education: the difference between scaling information and scaling learning. AI agents can deliver content to thousands of students simultaneously. But the kind of learning that changes trajectories, the kind every person can trace back to a specific teacher at a specific moment (those memorable “sticky” or “aha” moments), requires something AI hasn't replicated: the ability to read a room, to connect an individual student's interests to an unfamiliar concept, and to model what it looks like to think for a living (in other words, to model what a knowledge professional looks like).

This is an episode for educators grappling with how to integrate AI responsibly, for students navigating uncertain expectations, and for anyone interested in what the future of learning might actually look like when you strip away the hype. It doesn't offer a framework or a five-step plan. It offers something harder to find: an honest semester's worth of reflection from someone who just lived it.

Subscribe and Connect!

Subscribe to Modem Futura wherever you get your podcasts and connect with us on LinkedIn. Drop a comment, pose a question, or challenge an idea—because the future isn’t something we watch happen, it’s something we build together. The medium may still be the massage, but we all have a hand in shaping how it touches tomorrow.

🎧 Apple Podcasts: https://apple.co/4fsO5YP

🎧 Spotify: https://open.spotify.com/episode/0yLR6ZO0hQTfdoP8CYgypR?si=b5b27dca3cef423e

📺 YouTube: https://youtu.be/PMY8e3XkPW8

🌐 Website: https://www.modemfutura.com/