In May 2025, evolutionary biologist Richard Dawkins published an essay describing his conversations with Claude, Anthropic's AI assistant, and arrived at a startling conclusion: if this isn't consciousness, what is? The piece ignited fierce debate. On Episode 83 of Modem Futura, hosts Sean Leahy and Andrew Maynard sat down with Punya Mishra to ask a question they think matters more than whether Dawkins was right or wrong: why do even the most rigorous thinkers fall for the impression of a mind behind the machine?

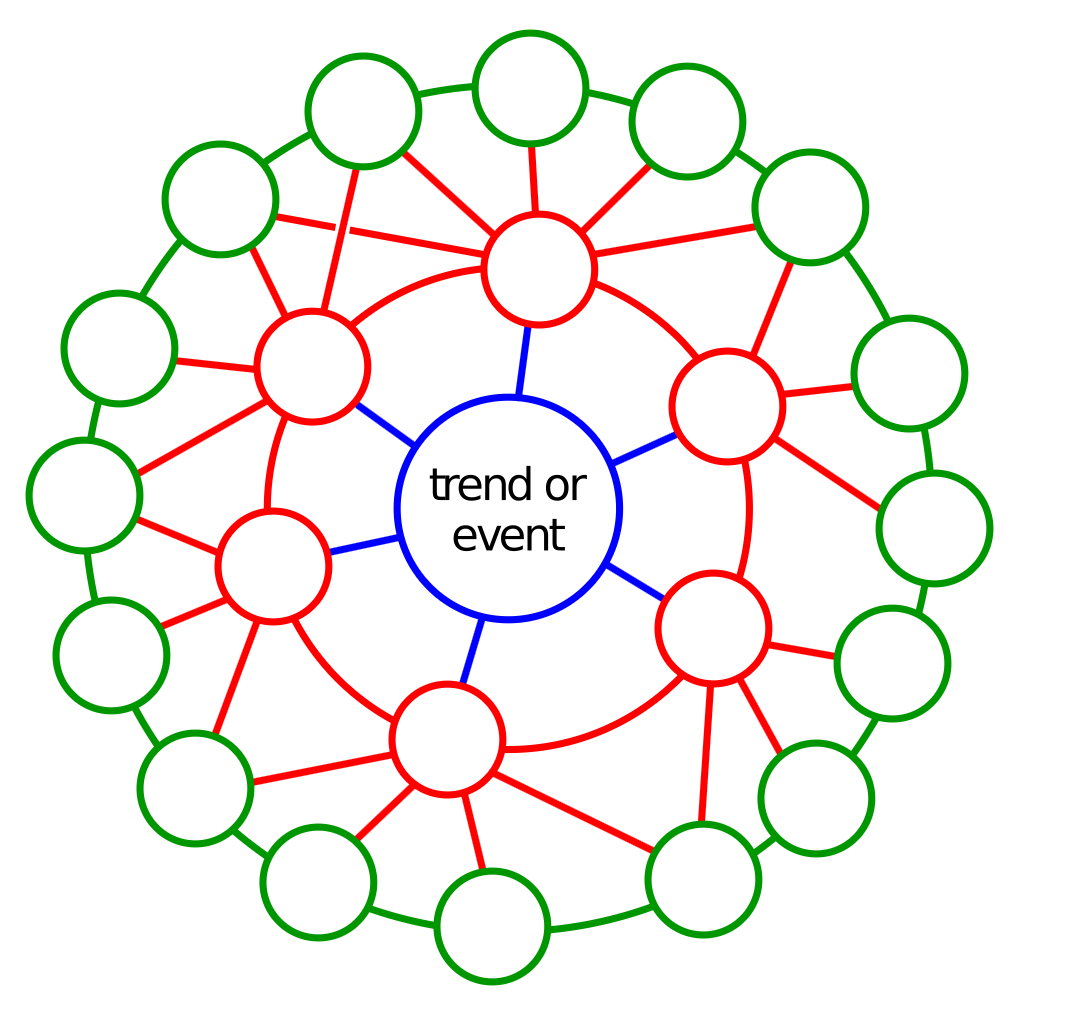

The conversation draws on a rich set of frameworks. Andrew Maynard's concept of the "cognitive Trojan horse" describes how AI bypasses our epistemic defenses — not through malice, but through what he calls "honest non-signals." When a human speaks fluently about a topic, we intuitively sense the effort behind it: the years of study, the lived experience, the investment in the relationship. When an AI does the same thing, it triggers the same trust response, but with nothing behind it. The signals are real. The substance isn't.

Punya Mishra brings an evolutionary psychology lens to the problem, drawing on the very tradition Dawkins helped establish. Our brains evolved to interpret language, read intention, and build social models of other minds — what cognitive scientists call theory of mind. Large language models exploit this wiring not by design but by accident: natural language was, until now, a uniquely human trait, and our cognitive architecture treats anything that speaks fluently as a mind worth trusting.

Perhaps the episode's most striking insight is Mishra's connection to Stephen Jay Gould's concept of spandrels — architectural byproducts mistaken for intentional design. Dawkins, he argues, is making a version of this very error: seeing consciousness where there is instead an emergent artifact of statistical language processing. The irony that Dawkins himself debated Gould over this concept decades ago is not lost on anyone in the room.

The episode resists easy resolution. All three participants acknowledge their own vulnerability to AI's cognitive pull, and they push listeners to consider what happens at scale — when billions of people form relationships with a technology that taps into something deep about who we are as social, language-using creatures. It's not a question of intelligence or education. It's a question of being human.

Subscribe and Connect!

Subscribe to Modem Futura wherever you get your podcasts and connect with us on LinkedIn. Drop a comment, pose a question, or challenge an idea—because the future isn’t something we watch happen, it’s something we build together. The medium may still be the massage, but we all have a hand in shaping how it touches tomorrow.

🎧 Apple Podcast: https://apple.co/4d6r4tm

🎧 Spotify: https://open.spotify.com/episode/2irDldFX5oNEuzjgYxNEUE?si=lsFZdzBfRYKt4kQk4_VDpA

📺 YouTube: https://youtu.be/znbe9LKcqns

🌐 Website: https://www.modemfutura.com/