There's a reason some organizations consistently seem to see disruption coming, and it's usually not because they're smarter or better funded. It's because they've built structured habits of thinking about change across multiple time horizons at once, and they've learned how to trace the cascading consequences of a single shift before it becomes a crisis.

Two of the oldest and most reliable tools for doing exactly that are the Three Horizons Framework and the Futures Wheel. In this episode of Modem Futura, hosts Sean Leahy and Andrew Maynard break both down in accessible, conversational detail — and show what becomes possible when you use them together.

The Three Horizons Framework

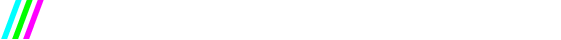

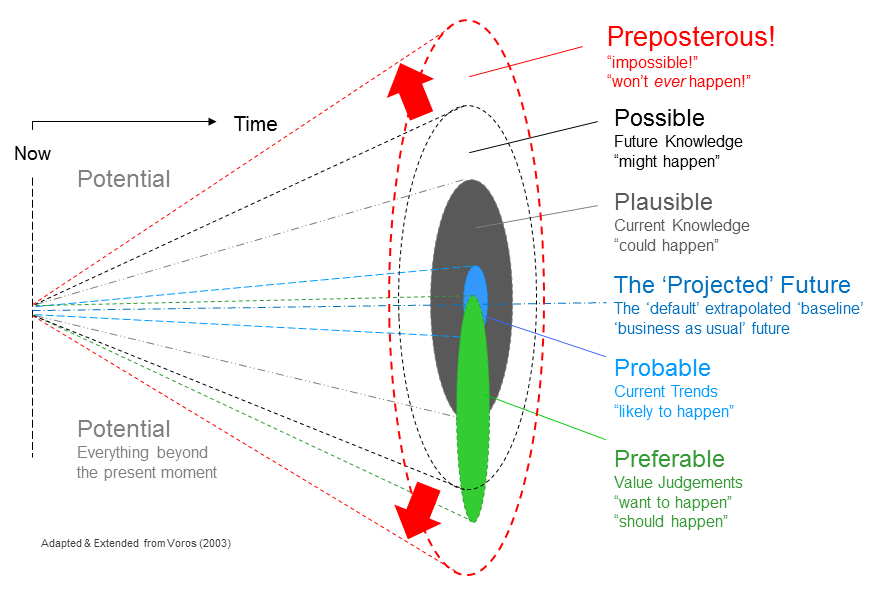

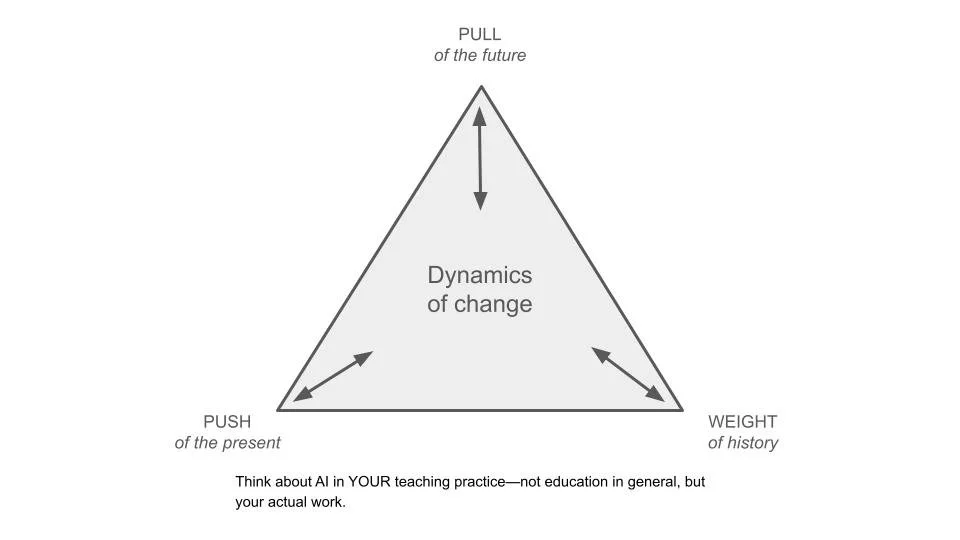

The Three Horizons Framework, originally developed by Bill Sharpe and widely used in professional foresight and strategic planning, divides the landscape of change into three overlapping zones. Horizon 1 represents the dominant present: the systems, structures, and assumptions that govern how the world works today. Horizon 3 is the emergent fringe: weak signals, nascent ideas, and early-stage shifts that are observable but not yet mainstream. And Horizon 2 is the transitional space between them, which is turbulent, hard to define, and full of both opportunity and risk.

The model doesn't tell you what the future will bring. What it offers is a way of *positioning* trends, signals, and innovations in relation to change — helping individuals and organizations understand what to watch, what to act on, and what to prepare for.

The Futures Wheel

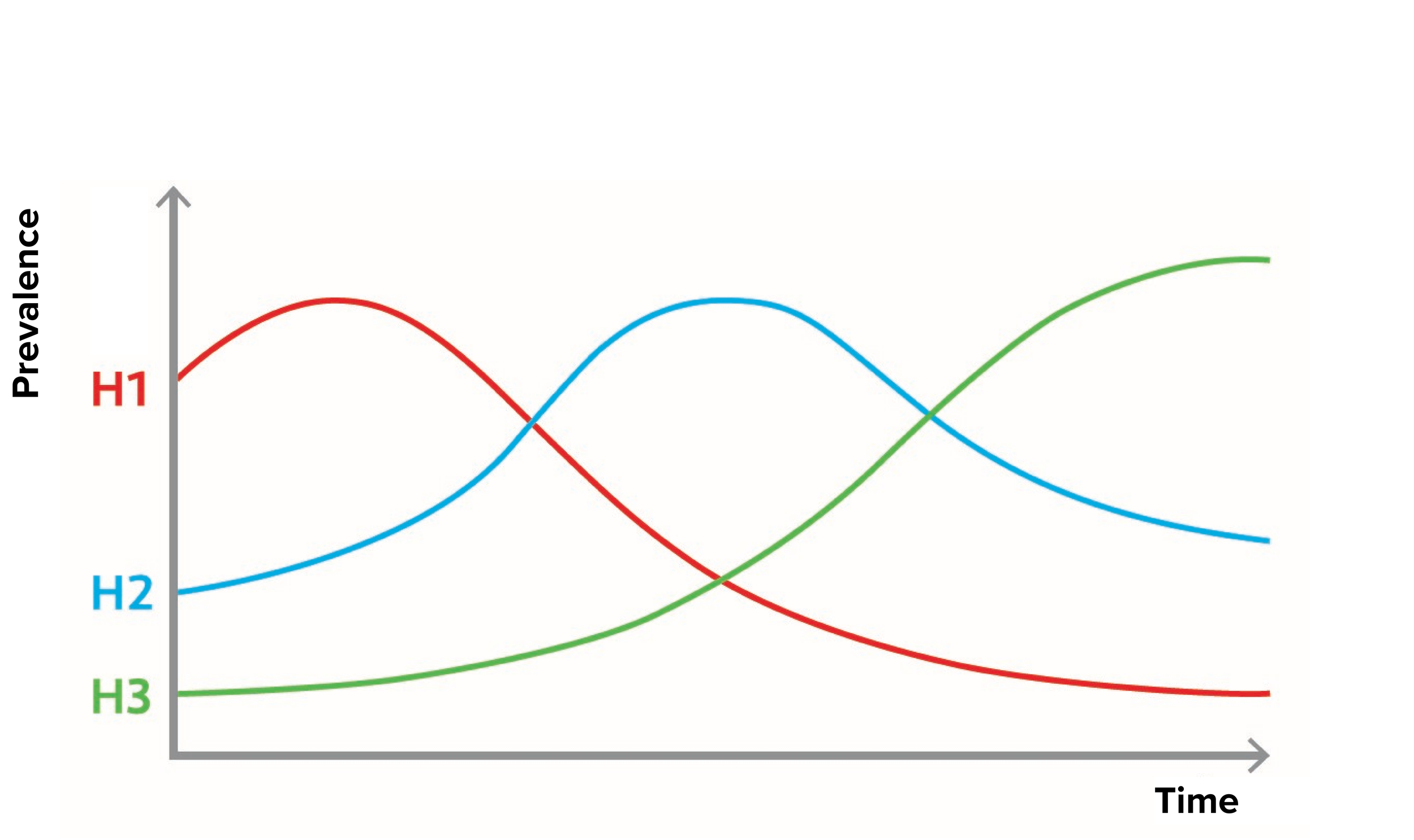

The Futures Wheel, developed by Jerome Glenn in 1971, works differently but in a complementary way. Starting from a specific change or trend, it maps outward through first-, second-, and third-order consequences, building a rich, networked picture of how a single shift might ripple through a system over time. It's a brainstorming and sense-making tool, not a prediction engine, and it's at its most powerful when used with diverse groups who bring different perspectives to the same question.
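For readers who like to see a structure written down, here is one way a futures wheel could be captured in Python. This is only a minimal sketch: the `Consequence` class, the `by_order` helper, and the remote-work example are all made up for illustration and do not come from the episode or from Glenn's method itself. The assumption is simply that a wheel can be treated as a tree, with the central change at the root and each ring of consequences one step further out.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Consequence:
    """One node in a futures wheel: the central change or a consequence of it."""
    description: str
    children: list[Consequence] = field(default_factory=list)

    def add(self, description: str) -> Consequence:
        """Attach a direct consequence of this node and return it."""
        child = Consequence(description)
        self.children.append(child)
        return child


def by_order(root: Consequence) -> dict[int, list[str]]:
    """Group consequences by how many steps they sit from the central change."""
    rings: dict[int, list[str]] = {}
    frontier = root.children
    order = 1
    while frontier:
        rings[order] = [node.description for node in frontier]
        # Move one ring outward: collect every child of the current ring.
        frontier = [child for node in frontier for child in node.children]
        order += 1
    return rings


# Hypothetical example content, invented purely to show the shape of a wheel.
wheel = Consequence("Remote work becomes the default")
commute = wheel.add("Fewer daily commutes")
office = wheel.add("Lower demand for office space")
commute.add("Less downtown foot traffic").add("City-centre cafes close or relocate")
office.add("Office buildings converted to housing")

for order, items in by_order(wheel).items():
    print(f"Order {order}: {items}")
```

In a real session the entries would come from the group in the room rather than from one person typing; the code only records the result so each ring can be read back in order.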

Used individually, each tool offers genuine insight. Used together, they offer something more: a way of understanding not just *what* a signal might do, but *when* and *through which pathways* it might do it.

Whether you're a founder trying to figure out which wave to ride, a strategist scanning for disruption, or simply someone trying to make better decisions in an uncertain world, these tools are worth adding to your thinking practice.

🎧 Listen to the full episode wherever you get your podcasts, or watch on YouTube.

Subscribe and Connect!

Subscribe to Modem Futura wherever you get your podcasts and connect with us on LinkedIn. Drop a comment, pose a question, or challenge an idea—because the future isn’t something we watch happen, it’s something we build together. The medium may still be the massage, but we all have a hand in shaping how it touches tomorrow.

🎧 Apple Podcast: https://apple.co/4sosMdQ

🎧 Spotify: https://open.spotify.com/episode/58Fdc2SrWodBTbwfxK8Pwm?si=leiCnhRsQxuv-_hnxEeNjQ

📺 YouTube: https://youtu.be/eVk6L_VfAkY

🌐 Website: https://www.modemfutura.com/