The History of our Future

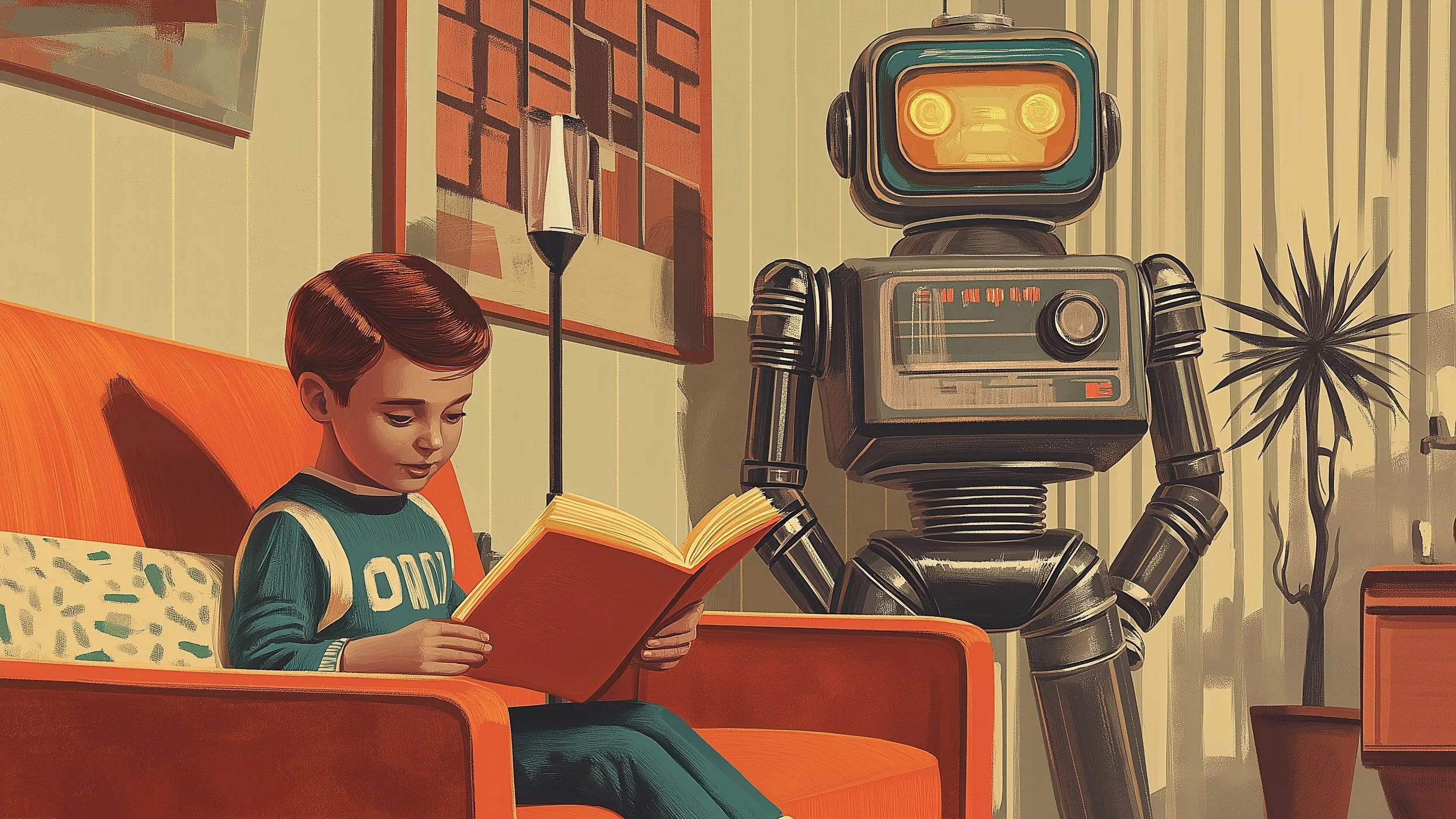

More than seventy years ago, Isaac Asimov imagined a future where children learn in isolation, guided by personalized mechanical tutors, and books are relics of a forgotten age. His 1951 short story, "The Fun They Had," is set in 2157, but its questions feel startlingly current.

In the story, a young girl named Margie reads a paper book that her friend Tommy has found and learns about a time when children went to school together: they sat in classrooms, were taught by human teachers, and shared the experience of learning with their peers. Her own education is efficient, personalized, and lonely. Her mechanical teacher can diagnose her struggles and recalibrate its approach, but it cannot inspire her, connect with her, or make her feel like she belongs to something larger than a lesson plan.

“Asimov didn’t predict AI as we know it. But he predicted the question that matters most: in our rush to optimize education, are we designing out the very things that make learning meaningful?”

This is precisely the tension at the heart of today's conversation about AI in education. The promise of AI-powered tutors is real and, in many cases, genuinely valuable: adaptive pacing, instant feedback, content tailored to individual needs. But when personalization becomes the dominant paradigm—when every learner is on a separate track, in a separate space, at a separate time—the communal dimensions of education begin to disappear.

Natural Human Impulses for Learning (not schooling)

John Dewey argued more than a century ago that learning is driven by four natural impulses: inquiry, communication, construction, and expression. Most of these are inherently social. They depend on friction, dialogue, surprise, and the presence of other people. No amount of algorithmic sophistication can fully replicate the moment a teacher's unexpected enthusiasm shifts a student's entire trajectory, or the experience of working through difficulty alongside peers who share the same struggle.

Asimov's story also raises a subtler question about what endures. The book Margie reads has survived two centuries. Its words, fixed on a physical page you can hold, carry a kind of permanence that digital media cannot easily match. This resonates with the growing cultural appetite for analog experiences: vinyl records, film photography, even old iPods. These are not acts of technological rejection. They are expressions of a deeper need for embodied engagement, deliberate choice, and the kind of friction that gives experience its texture.

Where do we go next?

None of this means AI has no place in education. It does, and increasingly will. But Asimov's story is a quiet reminder that the most important things about learning—curiosity, connection, belonging, the joy of shared discovery—are not problems to be optimized. They are human experiences to be protected.

The question is not whether AI can teach us. It's whether, in building systems that teach us more efficiently, we are designing out the very things that made learning worth having in the first place.

*Episode 71 of Modem Futura explores these themes through Asimov's story and a wider conversation about technology, nostalgia, and what it means to learn as a human being.*

Subscribe and Connect!

Subscribe to Modem Futura wherever you get your podcasts and connect with us on LinkedIn. Drop a comment, pose a question, or challenge an idea—because the future isn’t something we watch happen, it’s something we build together. The medium may still be the massage, but we all have a hand in shaping how it touches tomorrow.

🎧 Apple Podcast: https://apple.co/4s1lDk1

🎧 Spotify: https://open.spotify.com/episode/20I5j2DliUnZAbWDiVw7y8?si=WoEW_Zb2SPiynHYb4d8XHA

📺 YouTube: https://youtu.be/TDQc15Muwto

🌐 Website: https://www.modemfutura.com/